The biggest constraint on artificial intelligence is not chips, software, or capital. It is electricity. Now the tech giants are finally admitting it. Seven major companies (Amazon, Google, Microsoft, Meta, OpenAI, Oracle, and xAI) have signed a pledge committing to supply or finance their own power generation for the massive AI data centers they are building. In essence, the agreement says these firms will build or purchase new electricity sources and pay for the supporting infrastructure, so that the exploding demand for AI computing does not drive up electricity costs for ordinary consumers.

Data centers were once background infrastructure. AI has changed that completely. The energy requirements of AI computing are on an entirely different scale. Analysts now say AI data centers consume an order of magnitude more power than traditional server warehouses because of the massive computing loads required to run advanced models.

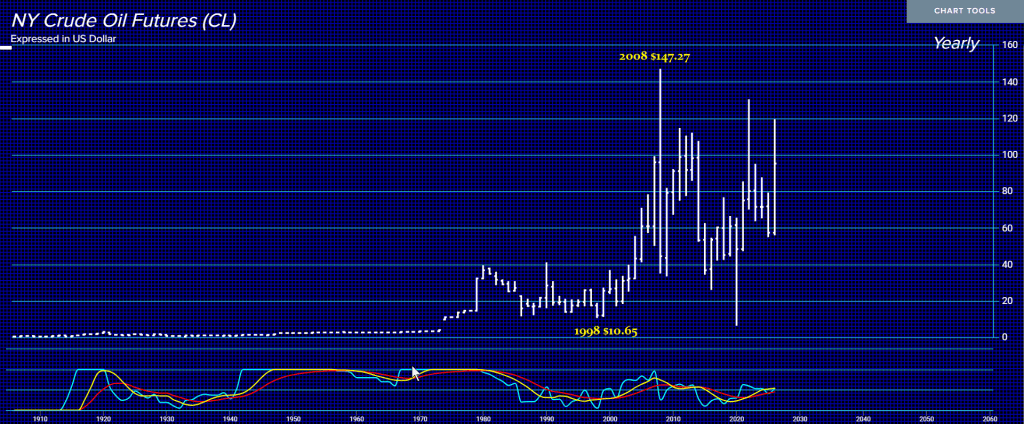

The numbers are staggering. U.S. data center electricity demand is expected to surge, reaching roughly 75.8 gigawatts in 2026 and potentially more than 134 gigawatts by 2030. Meanwhile, the Department of Energy estimates that data centers could consume between 6.7% and 12% of all U.S. electricity by 2028. Global projections are even more dramatic, with data center electricity consumption potentially reaching hundreds of terawatt-hours annually as AI infrastructure expands worldwide.
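To put those two U.S. figures in perspective, here is a rough back-of-the-envelope sketch of the annual growth rate they imply. It assumes smooth compound growth between 2026 and 2030, which the forecasts themselves do not claim. The second helper converts gigawatts into terawatt-hours per year under the further assumption that the load runs flat out around the clock, so treat it as an upper bound rather than a forecast. The function names are mine and purely illustrative.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def implied_annual_growth(start_gw: float, end_gw: float, years: int) -> float:
    """Compound annual growth rate needed to go from start_gw to end_gw."""
    return (end_gw / start_gw) ** (1 / years) - 1


def continuous_load_twh(gw: float) -> float:
    """Annual energy in TWh if the load ran at gw for every hour of the year.

    Assumption: 100% utilization, which overstates real consumption.
    """
    return gw * HOURS_PER_YEAR / 1000  # GWh -> TWh


# Figures quoted above: roughly 75.8 GW in 2026, more than 134 GW by 2030.
growth = implied_annual_growth(75.8, 134.0, 2030 - 2026)
print(f"Implied growth: {growth:.1%} per year")
print(f"Upper-bound 2026 energy: {continuous_load_twh(75.8):.0f} TWh")
```

On those assumptions, demand would have to grow by roughly 15% a year, every year, for four years running. For an industry used to flat consumption, that is an enormous pace.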

Anyone who has followed my work already knows this problem was inevitable. I wrote previously that an electricity crisis was on the horizon precisely because governments were pursuing contradictory policies. They pushed the electrification of everything from cars and heating systems to industry while simultaneously shutting down reliable power generation and blocking new nuclear development. Then, suddenly, the world discovered that AI requires an entirely new layer of energy infrastructure.

Even utilities are now warning that electricity demand is entering a new phase of rapid growth. After years of relatively flat consumption, U.S. power usage is expected to hit record levels in both 2026 and 2027, driven largely by AI data centers and the electrification of industry and transportation.

This is why the tech companies are suddenly pledging to build their own power sources. Local communities and utilities have begun pushing back against massive data center projects that could strain power grids and raise electricity costs for consumers. The pledge is essentially an attempt to reassure regulators and voters that the AI boom will not destabilize the energy system.

But this only highlights the deeper structural issue. Electricity infrastructure takes years or decades to build. AI demand is exploding now. The result is a growing gap between technological expansion and energy capacity. The irony is remarkable. Governments around the world spent years lecturing the public about reducing electricity consumption while simultaneously promoting industries that require vastly more power. Artificial intelligence is not just a technological revolution; it is also an energy revolution.

If electricity supply does not expand dramatically, AI growth itself could hit a hard physical limit. The warning signs are already appearing. Tech companies are reopening nuclear plants, building dedicated power facilities, and now pledging to generate their own electricity simply to keep AI infrastructure running. When private companies begin building power plants to support their software, you know the system has reached a turning point.